No More Vibe Coding: Lessons from a Netflix Refactoring Project on a Million-Line Codebase

No more slop.

“Don’t outsource your thinking.”

That line came up again and again at the AI Engineer Summit in NYC. The most practical talk came from Jake, an ex-Netflix Staff Engineer, who walked through what he learned on a migration project at Netflix and what “don’t outsource your thinking” really means when you’re dealing with a million-line codebase. His focus was how to get the most out of AI dev tools on an enterprise-level codebase instead of vibe coding through it.

It wasn’t about how to generate more slop as an engineer using Cursor or Claude Code.

It was about retaining ownership of understanding in a codebase where code generation is effectively infinite, even for a senior engineer who vibe codes.

When vibe coding is not enough on a million-line codebase

On a codebase the size of Netflix’s (roughly a million lines of Java, with a main service of around five hundred lines), “vibe coding” with an AI assistant simply does not scale. Tossing big chunks of code into a context window and hoping patterns emerge leads to three consistent failures:

The model can’t see the seams.

Authorization checks and business logic were woven together: permission checks inline with domain logic, role assumptions baked into data models, and auth calls scattered across hundreds of files. Any agent asked to “refactor to the new auth system” struggled to distinguish which parts were essential business rules and which were just historical implementation details.

Accidental complexity gets re-encoded, not removed.

Instead of simplifying, generated changes tended to preserve old quirks, just translated into the new system. Legacy assumptions became first-class citizens in the new architecture, locking in the very complexity that needed to be shed.

Context thrash replaces genuine understanding.

Dumping more files, more functions, more snippets into the context only made things noisier. The system would get a few files in, hit some hidden dependency or weird coupling, and spiral into confusion or produce half-coherent changes that were hard to trust.
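To make the first failure concrete, here is a minimal Python sketch (the codebase in the talk is Java; every name below, such as `can_edit_title` and `rename_title`, is hypothetical and not from the talk). The first function weaves a permission rule into domain logic. The second moves the same rule behind a single function, which is the kind of seam a model cannot reliably infer from the entangled version.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    name: str
    roles: frozenset


@dataclass(frozen=True)
class Title:
    id: int
    name: str
    owner: str


# Entangled: the permission rule and the business rule share one function body,
# so nothing marks which lines are "auth" and which are "domain".
def rename_title_entangled(user: User, title: Title, new_name: str) -> Title:
    if "admin" not in user.roles and title.owner != user.name:
        raise PermissionError("not allowed")
    if not new_name.strip():
        raise ValueError("name must not be empty")
    return Title(title.id, new_name.strip(), title.owner)


# Separated: the authorization decision lives behind one function (the seam).
def can_edit_title(user: User, title: Title) -> bool:
    return "admin" in user.roles or title.owner == user.name


def rename_title(user: User, title: Title, new_name: str) -> Title:
    if not can_edit_title(user, title):
        raise PermissionError("not allowed")
    if not new_name.strip():
        raise ValueError("name must not be empty")
    return Title(title.id, new_name.strip(), title.owner)
```

Both versions behave the same today. Only the second gives a migration one place to change.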

Sitting in that conference room, it was obvious:

the limiting factor isn’t how much code an AI can generate;

it’s how much complexity the humans around it still understand.

Context compression as the real scaling strategy

Rather than trying to fit the system into the model’s context, the strategy flipped: compress the understanding of the system, not the system itself.

That meant turning a million lines of code into a compact, high-signal specification, on the order of a couple thousand words, supported by real artifacts from the organization:

Architecture diagrams and design docs to define intended boundaries and flows.

Interfaces and data contracts to show how services actually communicate.

Slack threads and institutional knowledge to surface the unwritten rules and landmines.

Explicit requirements for how components should interact, what patterns must be followed, and what must never happen.

Instead of vague instructions like “migrate auth calls to the new service,” the output of this compression looked more like a surgical playbook:

Which components are in scope.

How data and control flow should change.

The precise sequence of operations needed to move safely.

Once that artifact exists, the AI stops being a speculative collaborator and becomes a very fast executor of a well-thought-out plan. The power moves from “ask it to figure it out” to “use it to implement what is already understood.”
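One way to picture the compressed artifact is as a small structured record rather than free-form prose. The sketch below is an assumption about shape only: the class `MigrationSpec`, its field names, and the sample values are invented for illustration and do not come from the talk.

```python
from dataclasses import dataclass, field


@dataclass
class MigrationSpec:
    """A compact, reviewable description of one migration (hypothetical shape)."""

    goal: str
    in_scope: list[str] = field(default_factory=list)
    flow_changes: list[str] = field(default_factory=list)
    steps: list[str] = field(default_factory=list)
    must_never: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the spec as plain text for human review or as model context."""
        sections = [
            ("Goal", [self.goal]),
            ("In scope", self.in_scope),
            ("Flow changes", self.flow_changes),
            ("Steps (in order)", [f"{i}. {s}" for i, s in enumerate(self.steps, 1)]),
            ("Must never happen", self.must_never),
        ]
        lines = []
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)


# Illustrative values only; the component names are made up.
example_spec = MigrationSpec(
    goal="Route permission checks for one entity through the new auth service",
    in_scope=["TitleService", "TitleRepository"],
    flow_changes=["Callers ask the auth service before loading the entity"],
    steps=["Add the new check behind a flag", "Switch reads", "Remove the old check"],
    must_never=["Return data when the auth service is unreachable"],
)
print(example_spec.render())
```

Keeping scope, ordering, and prohibitions as separate fields makes it harder to hand over a spec that silently omits one of them.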

A three-layer discipline: research, plan, implement

Jake shared how disciplined the workflow should be. It’s not about a magical technique; it’s about respecting the layers of understanding.

1. Research: compressing what is

Before any changes, the priority is to build an accurate mental model of the current system. That means:

Pulling together all relevant artifacts: diagrams, docs, code slices, logs of past incidents, even old Slack debates about why something was done a certain way.

Using AI as an analyst to map dependencies, describe how components relate, and hypothesize about behavior under failure, caching, or load.

Challenging that analysis with targeted questions—“How does this fail?” “What depends on this permission?”—and correcting mistakes.

The output is a single, coherent research document: what exists, how it’s connected, and what a change would touch.

The crucial part is the human checkpoint. Sitting there as an attendee, it was clear this is where the “don’t outsource your thinking” mantra lives. The document gets validated against reality. Misconceptions are caught early, before they compound into architectural damage.
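Part of the dependency mapping in this phase is mechanical and can be scripted before any model is involved. The sketch below scans Java source text for call sites of a legacy auth helper and reports them with line numbers. The identifier `LegacyAuth` and the function names `scan_source` and `find_auth_call_sites` are hypothetical stand-ins, not names from Netflix's codebase.

```python
import re
from pathlib import Path

# Hypothetical legacy helper; matches calls such as LegacyAuth.hasRole(...).
AUTH_CALL = re.compile(r"\bLegacyAuth\.(\w+)\s*\(")


def scan_source(text: str) -> list:
    """Return (line number, method name) for each legacy auth call in one file."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in AUTH_CALL.finditer(line):
            hits.append((lineno, match.group(1)))
    return hits


def find_auth_call_sites(root: str) -> dict:
    """Map each .java file under root (that has hits) to its auth call sites."""
    sites = {}
    for path in sorted(Path(root).rglob("*.java")):
        hits = scan_source(path.read_text(encoding="utf-8", errors="replace"))
        if hits:
            sites[path.relative_to(root).as_posix()] = hits
    return sites
```

A regex pass like this also matches commented-out code and misses wrappers, reflection, and indirect calls, which is one reason the resulting research document still needs the human checkpoint.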

2. Planning: deciding what should be

With a trusted understanding of the current state, the next layer is a detailed implementation plan, not just a wishlist:

Concrete code structure: modules, files, interfaces, and boundaries.

Function signatures, type definitions, and data flow spelled out clearly.

Edge cases and constraints captured explicitly, so the AI has no room to quietly invent behavior (fallbacks, for example).

The bar is simple: a junior engineer should be able to follow the plan line by line and have the change come together correctly. That doesn’t mean the work is trivial; it means the thinking has already been done at the right abstraction level.

This is where years of scar tissue from production incidents really matter. Decisions about where to draw seams, how to isolate complex logic, and which patterns to avoid are made here, up front, before a single new line of code is generated.
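As an illustration of what “function signatures, type definitions, and data flow spelled out clearly” can look like, a plan might pin down the target interface before any implementation exists. This is a hypothetical sketch: `AuthDecision`, `Authorizer`, `StaticAuthorizer`, and the deny-by-default rule are assumptions chosen to show the level of detail, not the design used at Netflix.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class AuthDecision:
    allowed: bool
    reason: str


class Authorizer(Protocol):
    """The interface every migrated call site is expected to depend on."""

    def authorize(self, principal: str, action: str, resource: str) -> AuthDecision:
        ...


class StaticAuthorizer:
    """Small reference implementation used to pin down edge cases in the plan."""

    def __init__(self, grants: set):
        # Each grant is a (principal, action, resource) tuple.
        self._grants = set(grants)

    def authorize(self, principal: str, action: str, resource: str) -> AuthDecision:
        if (principal, action, resource) in self._grants:
            return AuthDecision(True, "explicit grant")
        # Edge case decided in the plan: unknown combinations are denied,
        # never allowed through an implicit fallback.
        return AuthDecision(False, "no matching grant")
```

Writing the denial path down as code leaves a generator nothing to improvise for the unknown case.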

3. Implementation: executing what’s already understood

Only once the research and plan are solid does the implementation phase begin. At that point, AI becomes a force multiplier, not a co-architect:

Agents can generate the mechanical parts of the refactor in the background.

Reviews focus on conformance to the plan, not deciphering novel structures the model invented.

Instead of 50 meandering interactions filled with evolving code, a small number of focused generations align tightly with the agreed architecture.

The net effect: complexity doesn’t accumulate by accident, because the system’s evolution is grounded in artifacts that humans can read, critique, and revise quickly.
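Reviewing for conformance can be partly automated. The following sketch assumes the plan lists the files in scope and compares that list with the files a generated change actually touched. The function name `out_of_plan_changes` and the file paths are hypothetical.

```python
def out_of_plan_changes(planned: set, changed: set) -> dict:
    """Compare the files a plan names with the files a change touched."""
    return {
        # Touched but never mentioned in the plan: needs a human decision.
        "unplanned": sorted(changed - planned),
        # Named in the plan but untouched: the change may be incomplete.
        "missing": sorted(planned - changed),
    }


report = out_of_plan_changes(
    planned={"auth/TitleAuthorizer.java", "title/TitleService.java"},
    changed={"title/TitleService.java", "billing/InvoiceService.java"},
)
print(report)
```

A check like this only covers which files moved, not what changed inside them, so it narrows the review rather than replacing it.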

Earning the right to automate: the manual migration

One of the most important insights from Jake’s Netflix auth story is that this workflow only works after the team has earned enough understanding.

For the authorization migration, a key inflection point came from doing a full migration manually—no AI, just engineers reading code, changing it, and watching what broke. That painful, hands-on process surfaced:

Hidden invariants in the old auth system.

Services that would silently fail or misbehave if certain assumptions changed.

Edge cases that no automated code analysis or superficial reading would have revealed.

That manual PR then became a teaching artifact:

A concrete example of what a clean migration actually looks like in practice.

A grounding reference fed back into the research and planning phases for subsequent entities.

Even with that template, every entity had its own quirks: encrypted fields versus unencrypted ones, slightly different data shapes, legacy variants that had to remain intact. AI was used iteratively:

“Given this example, how would this other entity be migrated?”

“What changes when this field is encrypted?”

“What breaks if this contract changes?”

The message landed strongly in the room: there is no spec-writing trick that substitutes for earned understanding. The three-phase process is powerful, but only after the team has walked through at least one end-to-end change the hard way.
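The per-entity questions above can be grounded by first writing down how each entity differs from the one that was migrated by hand. The sketch below is one assumed way to do that; `EntityProfile`, `quirks_vs_reference`, and the sample entities are hypothetical and only illustrate the idea of comparing against a reference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntityProfile:
    name: str
    encrypted_fields: frozenset = frozenset()
    legacy_variants: frozenset = frozenset()


def quirks_vs_reference(reference: EntityProfile, entity: EntityProfile) -> list:
    """List what the manually migrated reference does not already cover."""
    notes = []
    extra_encrypted = entity.encrypted_fields - reference.encrypted_fields
    if extra_encrypted:
        notes.append(
            f"encrypted fields not covered by the reference: {sorted(extra_encrypted)}"
        )
    extra_legacy = entity.legacy_variants - reference.legacy_variants
    if extra_legacy:
        notes.append(
            f"legacy variants that must remain intact: {sorted(extra_legacy)}"
        )
    return notes


reference = EntityProfile("profile")
billing = EntityProfile("billing", frozenset({"card_number"}), frozenset({"v1"}))
for note in quirks_vs_reference(reference, billing):
    print(note)
```

The output is a list of places where the template cannot be trusted as-is, which is where human attention is best spent.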

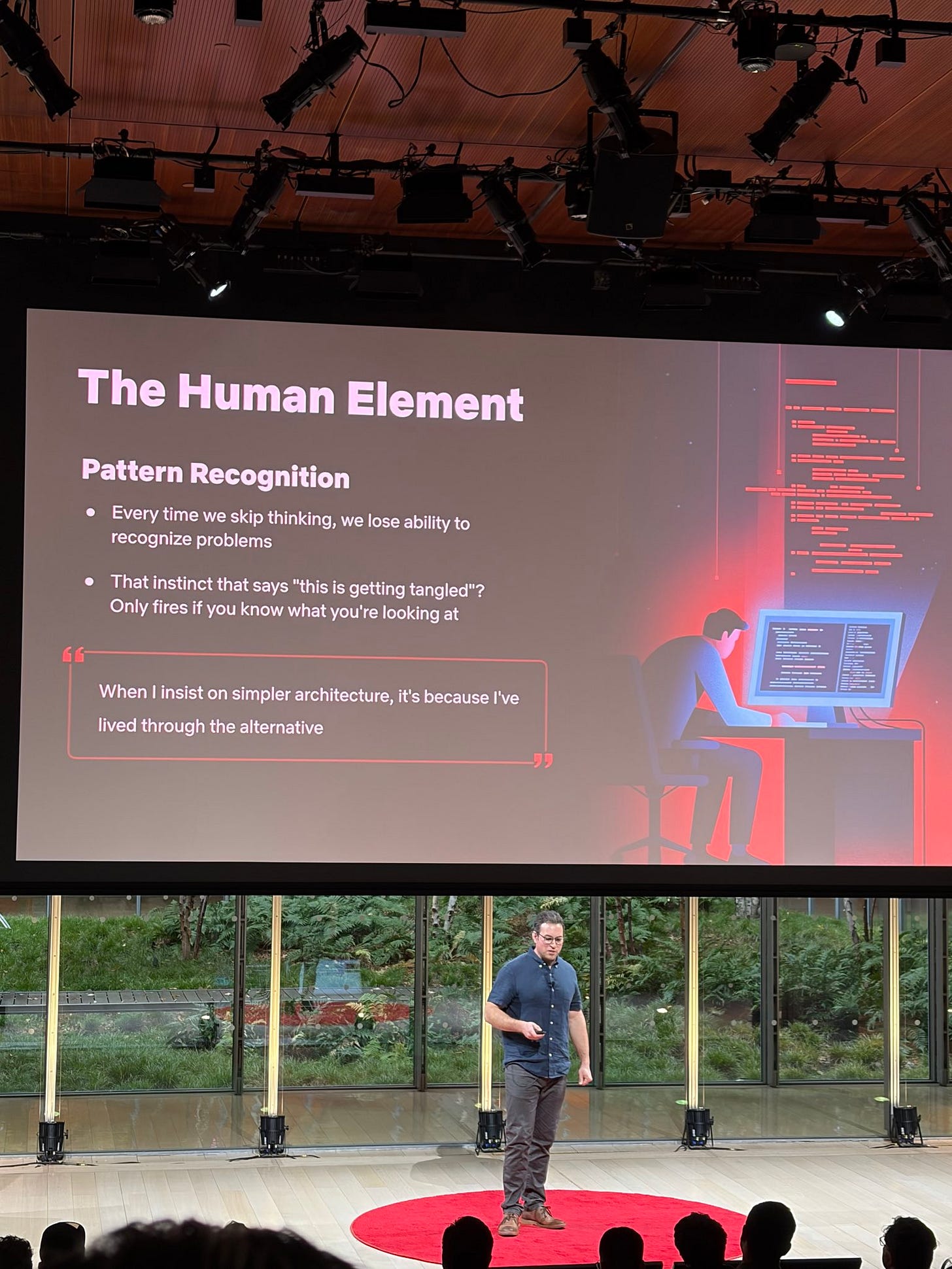

The real risk: losing the ability to see trouble coming

A lot of AI discourse focuses on whether generated code “works” or passes tests. Jake pushed a different concern: what happens to our judgment when code is cheap and instantaneous?

If thousands of lines can appear in seconds, but understanding them takes hours or days, there’s a real asymmetry:

Complexity can grow faster than anyone can track.

Subtle coupling can slip in quietly, behind green test runs.

The instinct that says “this architecture will wake someone up at 3am in six months” starts to dull if no one truly understands the system anymore.

Pattern recognition in software—knowing when something smells wrong—isn’t just pattern matching on code shapes. It comes from being the person who had to maintain the last overengineered solution, clean up the last cascading failure, or debug the last “clever” abstraction. AI has none of that context; it encodes patterns, not regret.

The research–plan–implement discipline is, in a sense, a way to keep that instinct alive:

Research forces articulation of how the system really behaves.

Planning forces explicit, reviewable decisions.

Implementation stays tethered to those decisions, instead of chasing whatever the model thought looked plausible.

The question that lingered for me, sitting in that audience, wasn’t “Will AI write our code?” That’s basically settled. The sharper question was:

When AI is writing most of the code, will we still understand our own systems?

Takeaways for engineers building with AI

A few durable lessons from this session at the summit:

Use AI to accelerate execution, not to dodge understanding.

The more critical the change, the more important it is to own the mental model yourself.

Invest in context compression.

Specs, diagrams, research docs, and example PRs are not bureaucracy; they’re how understanding becomes portable and reviewable at AI speed.

Do at least one major migration manually.

Especially in complex legacy systems, that first painful refactor is where the real constraints reveal themselves.

Treat human checkpoints as non-negotiable.

The faster code is generated, the more vital it becomes to slow down for architectural review and reality checks.

The future isn’t about who can generate the most lines of code with the flashiest agent. It’s about who can keep seeing the seams in a system large enough that no single context window—or single human—can hold it all at once.

“Don’t outsource your thinking” sounded like a slogan going into the summit. After this talk, it feels more like a survival rule.

“No more slop.”